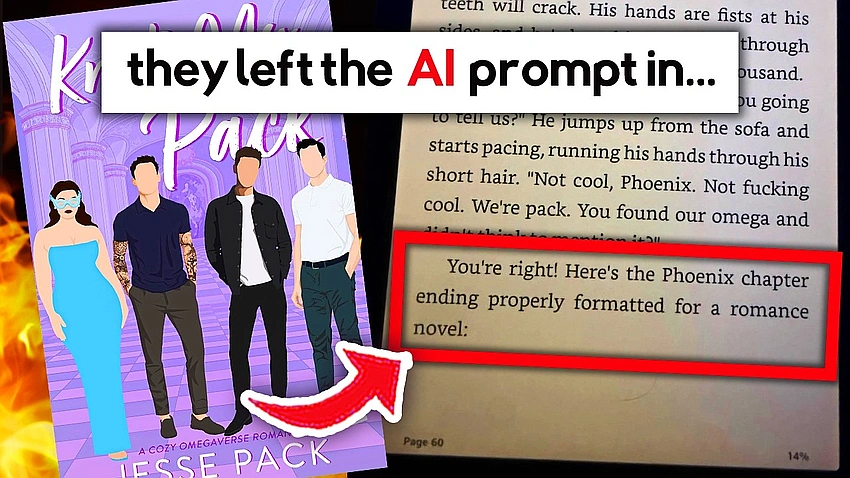

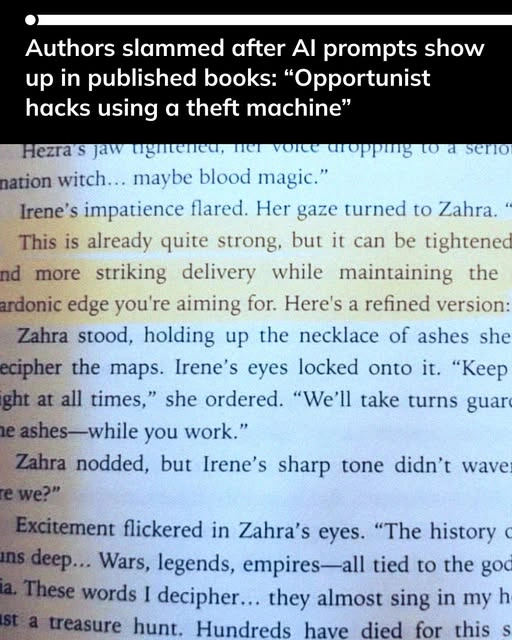

There is a specific kind of humiliation that belongs to the internet age. A reader opens an ebook, starts scrolling, and there it is, sitting inside the chapter like a coffee stain that refuses to come out: an AI prompt left in the final manuscript.

This is the moment the conversation turns hysterical. People start saying “literacy is dead” and “books are over”. But the question I keep coming back to is simpler, and frankly more uncomfortable: what kind of author writes a book like this and expects to be taken seriously?

Because at the end of the day, using AI to write a book is not a harmless shortcut. It is a betrayal of art.

The scandal is not that AI exists. It is that the author chose it.

We need to be clear about something before the discourse tries to muddy it. Books have always involved collaboration. Editors cut, reshape, push. Copyeditors polish. Beta readers respond. Even ghostwriting, however ethically messy, still involves a human writer doing the work of writing.

Generative AI is fundamentally different.

It is not a “tool” in the same sense as an editor or an agent. It is a system built to imitate language at scale, to produce text by predicting what comes next, trained on a vast archive of human writing that it did not create. And when an author uses that mimicry to construct the voice, scenes, emotional beats, or structural movement of a novel, they are doing something worse than “cheating”. They are hollowing out the very premise of literature.

A novel is a record of human attention. The labour matters. The struggle matters. The time matters. It is what gives writing its charge: that another person sat down, wrestled with meaning, selected each sentence, and decided what to reveal and what to withhold.

When AI is used to generate that work, what is left is not a book in the proper sense. It is a manufactured object wearing the costume of a book.

And readers can feel that. That is why backlash erupts so violently when AI prompts are found inside published books. People are not merely annoyed. They are disgusted.

Artistry is not “output”. It is intention

In humanities terms, literature is not reducible to “content”. It is not the same as a caption, a blog post, a product description, or the kind of frictionless corporate writing that exists to be skimmed. Literature demands intention and interiority. Even in its most commercial forms, it still rests on a kind of human commitment.

AI collapses that commitment into a workflow.

The defence usually goes like this: “People use tools all the time.” “It’s just like spellcheck.” “It’s no different from having an editor.”

But the comparison is dishonest. Spellcheck does not write your scenes. An editor does not generate your metaphors. AI, when used in book writing, is not tidying the work. It is replacing the work.

And it is not merely replacing labour; it is replacing risk. The risk of bad writing. The risk of failure. The risk of being ordinary and having to revise yourself out of it. That risk is not incidental. It is where style is born. It is where voice is discovered. It is where artistry happens.

The publishing world is already sick. AI makes it worse

Publishing has become increasingly obsessed with speed, trend cycles, and platform hype. “Rapid release” is framed as a strategy. Writers are pressured into being brands. Readers are trained to consume novelty. AI slides into this environment like petrol on a fire.

It makes it possible to mass-produce books with industrial efficiency, and market incentives reward exactly that kind of behaviour. Business Insider reported on Tim Boucher, who used AI to produce a large number of books in a short time, and the story was framed as proof that AI makes infinite publishing possible. Whether one calls it experimentation or exploitation, the fact remains: AI turns literature into an assembly line.

And the saddest part is that it punishes actual writers, the ones who spend years learning how to make language sing.

AI writing is also ethically rotten

There is another reason this is unacceptable, and it goes beyond taste.

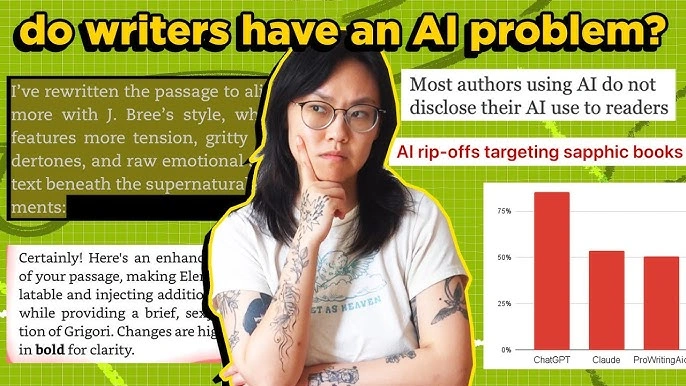

Generative AI is trained on enormous datasets, including copyrighted books, and the legal and ethical disputes around that are not abstract. Authors have been outspoken about their work being used without permission to train tools that may then be used to generate competing texts. The Guardian has reported on several instances of authors' anger and protests over the alleged use of pirated books in AI training datasets.

So when an author uses AI to “write” their book, they are participating in an ecosystem built on extraction. They are benefiting from a system that treats literature as raw material to be mined, not as labour that deserves compensation.

“But readers won’t notice” is exactly the point

What does it say about a writer who thinks the reader will not notice?

It says they do not respect the reader. It says they do not respect the craft. It says they see a book as something to shovel out, not something to make.

Literacy, properly understood, has always been about judgement. Taste. Discernment. It is the ability to sense when language is alive and when it is merely functioning.

And that is why, ironically, these scandals are clarifying. They reveal the difference between writing and text.

AI can generate text. It cannot produce literature. Using AI to write a book is unacceptable.

Not because it will “replace writers” in some dystopian sci-fi way. But because it shows a lack of true artistry. A refusal to do the work. A willingness to fake intimacy and sell it.

Literature is one of the last remaining places where slowness is still meaningful. Where attention is still sacred. Where human voice still matters. If someone wants to make algorithmic content, fine. The internet is overflowing with it. But if you are selling a book as a novel, as art, as your voice, and you use AI to generate that voice, you are not participating in literature. You are parasitising it. And readers are right to reject that.